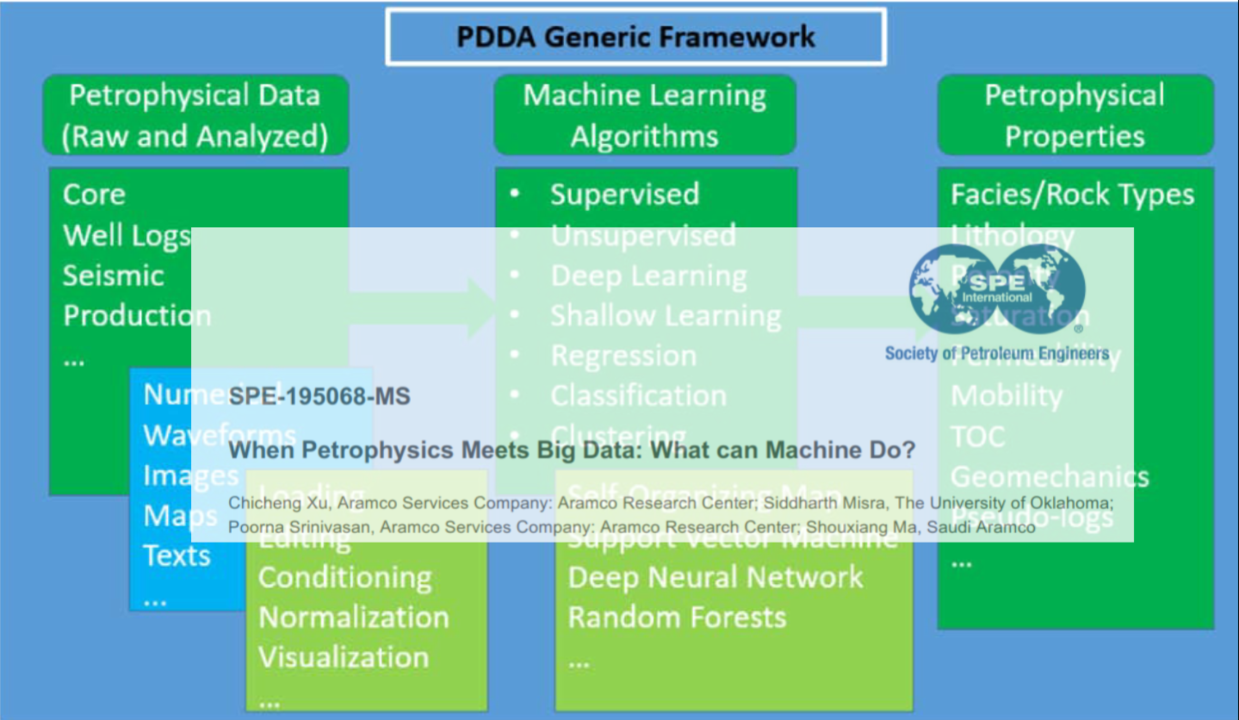

That is not the exact title of the paper. The actual title is “When Petrophysics Meets Big Data: What can Machine Do?”, and it was published recently on OnePetro. Link here.

It’s a very good paper. A must-read, and applicable to all disciplines in petroleum engineering. But is there really such a thing as “Big Data”?

My only observation is how the authors struggle to explain data analysis in the context of “big data”. Big data is a misnomer and really doesn’t mean anything in science, or in data science for that matter. “Big” is a relative term. How do you decide whether your data is big? Is it 100 MB? Is it 100 GB? Is it 2 TB? Or 100 TB?

Machine learning algorithms may work very well with small datasets of less than 10 MB. Other algorithms may fail even with 100 GB datasets. I think it is not about the size but about the characteristics, or singular features, of the datasets.

Petroleum engineers should stop using the term “big data” because it does not pertain to the field of data science. It is a speculative, non-scientific term.

Problems with data in the industry

The authors also bring up the problems with data in petroleum engineering. Besides (i) data quality and (ii) the limited data available, we should also add (iii) the absence of a reproducibility process in the publication of petroleum engineering papers.

A new SPE should be advocating for fully reproducible papers in this 4th Industrial Revolution. That means papers should come with references to their dataset repository, the scripts that transform the raw data into the final dataset and analysis, and a report based entirely on literate programming practices.
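To make the idea of a script that transforms raw data into the final dataset a bit more concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the inlined well-log sample, the column names `depth_m` and `porosity_pct`, and the helpers `transform` and `build_manifest` are hypothetical and do not come from the paper. The point is only that the cleaning step lives in code, and that checksums of the raw and final data let a reader confirm they reproduced the same dataset.

```python
import csv
import hashlib
import io
import json

# Hypothetical raw data: a tiny well-log extract, inlined so the sketch
# is self-contained. In practice this would come from the paper's
# dataset repository.
RAW_CSV = """depth_m,porosity_pct
1500.0,12.5
1500.5,
1501.0,14.0
"""


def sha256_text(text: str) -> str:
    """Return the SHA-256 hex digest of a text, used as a fingerprint."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def transform(raw_csv: str) -> str:
    """Drop rows with missing porosity and convert percent to fraction."""
    reader = csv.DictReader(io.StringIO(raw_csv))
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["depth_m", "porosity_frac"])
    for row in reader:
        if row["porosity_pct"].strip() == "":
            continue  # the cleaning rule is explicit, not done by hand
        fraction = float(row["porosity_pct"]) / 100
        writer.writerow([row["depth_m"], f"{fraction:.4f}"])
    return out.getvalue()


def build_manifest(raw_csv: str) -> dict:
    """Record fingerprints of the raw and final data for later checks."""
    final_csv = transform(raw_csv)
    return {
        "raw_sha256": sha256_text(raw_csv),
        "final_sha256": sha256_text(final_csv),
        "rows_final": final_csv.count("\n") - 1,  # minus the header line
    }


if __name__ == "__main__":
    print(json.dumps(build_manifest(RAW_CSV), indent=2))
```

Anyone who reruns the script on the same raw file should get the same two checksums. If they differ, either the data or the transformation has changed, and the paper's results can no longer be assumed to follow from it.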